Masden test highlight

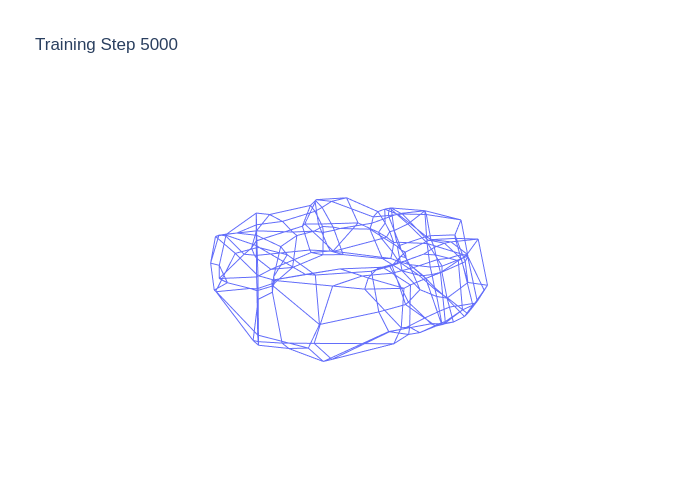

Identifying the capabilities of a machine learning model before applying it is a key goal in neural architecture search. If the model is too small, the network will never successfully perform its task. If it is too large, computational energy is wasted and the cost of training may become prohibitively high. While universal approximation theorems, and even descriptions of their dynamics, are available for various limiting forms of ReLU neural networks, many questions remain about what small ReLU networks can and cannot accomplish. Marissa Masden seeks to understand ReLU neural networks through a combinatorial, discrete structure that enables capturing topological invariants of structures like the decision boundary.

test repeating GIF

ICERM alumna Marissa Masden seeks to understand ReLU neural networks through a combinatorial, discrete structure that enables capturing topological invariants of structures like the decision boundary, the level set \(F(x) = 0\). The topology of this set is one way to characterize the classification capability of a network model.
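To make the decision boundary and its discrete structure concrete, here is a minimal sketch of a standard fact about ReLU networks (not Masden's construction): input space splits into cells indexed by which hidden neurons are active, and on each cell \(F\) is affine, so the boundary \(F(x) = 0\) is piecewise linear. The one-hidden-layer network, its weights `W1`, `b1`, `w2`, `b2`, and the helper names are hypothetical, chosen only for illustration.

```python
import numpy as np

# Hypothetical one-hidden-layer ReLU network F: R^2 -> R with three hidden
# neurons. The weights are made up for illustration only.
W1 = np.array([[1.0, 0.0],
               [0.0, 1.0],
               [1.0, 1.0]])
b1 = np.array([0.0, 0.0, -1.0])
w2 = np.array([1.0, 1.0, -2.0])
b2 = -0.5


def F(x):
    """Evaluate F(x) = w2 . ReLU(W1 x + b1) + b2."""
    return float(w2 @ np.maximum(W1 @ x + b1, 0.0) + b2)


def activation_pattern(x):
    """Which hidden neurons are active (1) or inactive (0) at x.

    Inputs sharing a pattern lie in the same cell of the decomposition
    of input space induced by the network."""
    return tuple(int(v) for v in (W1 @ x + b1 > 0))


def affine_piece(pattern):
    """Return (a, c) such that F(x) = a . x + c on the cell with this pattern."""
    s = np.array(pattern, dtype=float)
    a = (w2 * s) @ W1
    c = float((w2 * s) @ b1 + b2)
    return a, c


def predicted_class(x):
    """Binary label from the sign of F; the decision boundary is F(x) = 0."""
    return int(F(x) > 0)


x = np.array([1.0, 0.2])
p = activation_pattern(x)   # (1, 1, 1): all three neurons active
a, c = affine_piece(p)      # a = [-1, -1], c = 1.5 on this cell
print(p, F(x), a @ x + c, predicted_class(x))
```

For example, `affine_piece((1, 0, 0))` gives `a = [1, 0]` and `c = -0.5`, so inside that cell the decision boundary is the line \(x_1 = 0.5\); the point \((0.5, 0)\) indeed has \(F = 0\).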

test MathJax

Recent works analyze Anderson-accelerated Newton's method applied to singular problems, that is, problems whose Jacobian is singular at the solution.
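A minimal sketch of the idea, assuming the test problem \(f(x) = x^2 + x^3\) (chosen here only for illustration: it has a double root at \(x = 0\), where \(f'(0) = 0\), so plain Newton converges only linearly there) and the standard Anderson update applied to the Newton map \(g(x) = x - f'(x)^{-1} f(x)\). The function names and the depth-1 choice are hypothetical; this is not the algorithm or analysis of any particular paper.

```python
import numpy as np


def newton_step(f, jac, x):
    """One Newton update g(x) = x - J(x)^{-1} f(x)."""
    return x - np.linalg.solve(jac(x), f(x))


def anderson_newton(f, jac, x0, depth=1, tol=1e-12, max_iter=100):
    """Anderson acceleration (depth m) of the Newton fixed-point map g.

    Stops when ||f(x)|| < tol and returns (x, iterations used).
    depth=0 reduces to plain Newton."""
    x = np.asarray(x0, dtype=float)
    gs, ws = [], []  # histories of g(x_j) and residuals w_j = g(x_j) - x_j
    for k in range(max_iter):
        if np.linalg.norm(f(x)) < tol:
            return x, k
        gx = newton_step(f, jac, x)
        w = gx - x
        gs.append(gx)
        ws.append(w)
        # Keep depth + 1 entries, i.e. `depth` consecutive differences.
        gs, ws = gs[-(depth + 1):], ws[-(depth + 1):]
        if len(ws) == 1:
            x = gx  # plain Newton step until a history is available
            continue
        dW = np.column_stack([ws[j + 1] - ws[j] for j in range(len(ws) - 1)])
        dG = np.column_stack([gs[j + 1] - gs[j] for j in range(len(gs) - 1)])
        # Least-squares coefficients minimizing ||w - dW @ gamma||.
        gamma, *_ = np.linalg.lstsq(dW, w, rcond=None)
        x = gx - dG @ gamma
    return x, max_iter


# Illustrative singular problem: double root at 0.
f = lambda x: np.array([x[0] ** 2 + x[0] ** 3])
jac = lambda x: np.array([[2 * x[0] + 3 * x[0] ** 2]])

x_n, it_n = anderson_newton(f, jac, [0.5], depth=0)  # plain Newton
x_a, it_a = anderson_newton(f, jac, [0.5], depth=1)  # Anderson-accelerated
print(it_n, it_a)
```

In one dimension with depth 1, the accelerated update reduces to a secant step on \(w(x) = g(x) - x\). For this problem \(w(x) = -x(1+x)/(2+3x)\) has a simple root at 0 even though \(f\) has a double root, which is one way to see why acceleration helps in this example.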

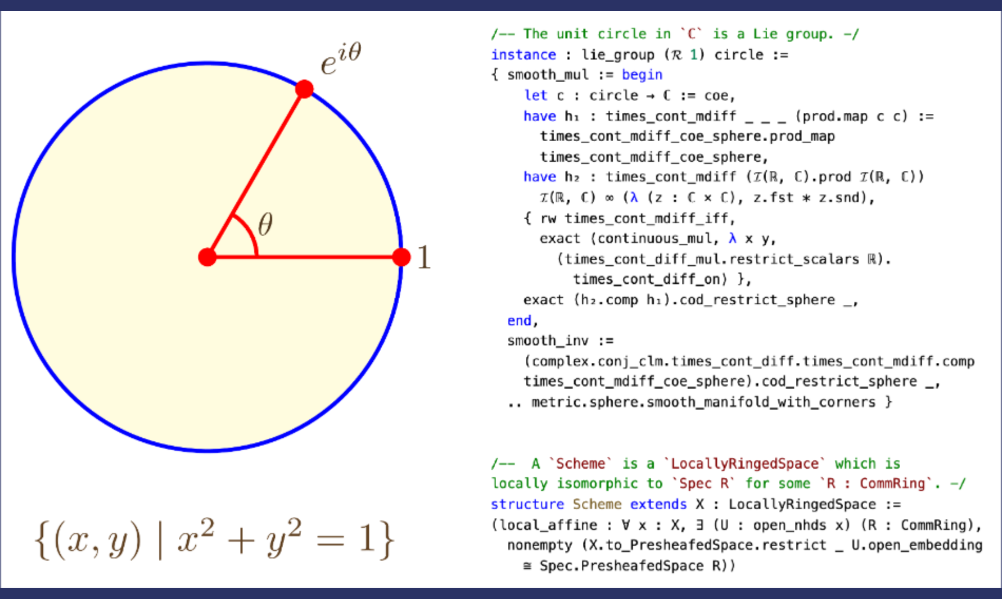

Lean for the Curious Mathematician 2022

Interactive theorem proving software can check, manipulate, and generate proofs of mathematical statements, just as computer algebra software can manipulate numbers, polynomials, and matrices. Over the last few years, these systems have become highly sophisticated and have learnt a large amount of mathematics.
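As a small illustration of proof checking, the sketch below is written in Lean 4 syntax. The theorem name `my_add_comm` is hypothetical, while `Nat.add_comm` is from Lean's core library. It states that addition of natural numbers is commutative and gives two proofs that Lean verifies: one as an explicit proof term, and one built with a tactic.

```lean
-- A proof term: Lean's kernel checks that `Nat.add_comm a b` has the stated type.
theorem my_add_comm (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- The same statement proved with a tactic: rewriting with commutativity makes
-- both sides identical, after which `rw` closes the goal by reflexivity.
example (a b : Nat) : a + b = b + a := by
  rw [Nat.add_comm]
```

If either proof were wrong, Lean would report an error instead of accepting the statement.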

Gibbs measures and nonlinear wave equations

Gibbs measures are tools from probability that play a fundamental role in mathematics (for example, in partial differential equations (PDEs) and stochastic partial differential equations) and in physics (for example, in statistical mechanics and Euclidean field theories).
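As a formal illustration (the specific equation here is an assumed example, not one named above), consider the defocusing cubic nonlinear wave equation \(\partial_t^2 u - \Delta u + u + u^3 = 0\) on a torus, written as a Hamiltonian system in \((u, v)\) with \(v = \partial_t u\). Its energy and the associated Gibbs measure are, formally,

\[
H(u, v) = \frac{1}{2}\int \left(|\nabla u|^2 + u^2\right)dx + \frac{1}{2}\int v^2\,dx + \frac{1}{4}\int u^4\,dx,
\qquad
d\mu(u, v) = Z^{-1} e^{-H(u, v)}\,du\,dv .
\]

Hamilton's equations \(\partial_t u = \partial H/\partial v = v\) and \(\partial_t v = -\partial H/\partial u = \Delta u - u - u^3\) recover the equation, and since \(H\) is conserved by the flow, \(\mu\) is formally invariant. The expression is only formal because there is no Lebesgue measure \(du\,dv\) on an infinite-dimensional space; making it rigorous typically means realizing \(\mu\) as a density with respect to a Gaussian measure.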